Senior Engineers Should Not Be Vibe Coding

The new senior engineering skill is not writing every line. It is knowing which lines, patterns, and tradeoffs deserve human taste.

As I encounter more and more commentary about the rise of vibe coding, I want to share a real example of how I use Claude, Codex, and similar tools day to day: not as a “build everything for me” machine, but as an aware sounding board for architecture and design decisions. To me, that is where the real line between vibe coding and agentic software co-engineering lies.

The takeaway: the tools are brilliant, truly remarkable in their ability to reason and iterate toward a tested and workable product. But applications are more than software. They are data architecture, systems engineering, product thinking, and user experience. There is still plenty of room for elegance, and for better outcomes, when you keep your design iterations tight and a stable end product in mind. The full transcript is a little daunting, so here’s a less technical brief…

TL;DR

(Claude running on Opus 4.7 High)

Ryan: I’m surprised this bug surfaced after so many iterations that should have prevented it. Let’s take a step back and analyze the architecture.

Claude: I found the problem. Here’s a more durable and future-proof architecture, along with new patterns and tables.

Ryan: That would probably work, but it would also break the user interface. What if we do this much simpler thing instead?

Claude (thinking): The user proposed a simpler path. At first glance it seems too simple, but… wait. There’s nuance here. Actually, this is cleaner. And more durable. And better than what I proposed.

Claude (responding): I like your idea better than mine. Here’s what I was overcomplicating, plus one small tweak to improve it. Want me to build it?

Ryan: (paraphrasing) Let’s goooo.

Is posting this a little bit of an ego trip?

Probably.

It is validating to watch a model produce a plausible solution, then to spot its hidden costs and steer it toward a cleaner one. But that’s also the point: this is the kind of interaction that now separates vibe coders from software engineers.

The differentiator is no longer who can generate code fastest. It is who can recognize when a solution is structurally wrong, overfit, or inelegant — and direct it toward something better.

As someone who hires software developers, I look for exactly this awareness when people use co-authoring tools. Rather than “do this work for me,” I want to see “do this work with me.” And the difference isn’t hard to spot.

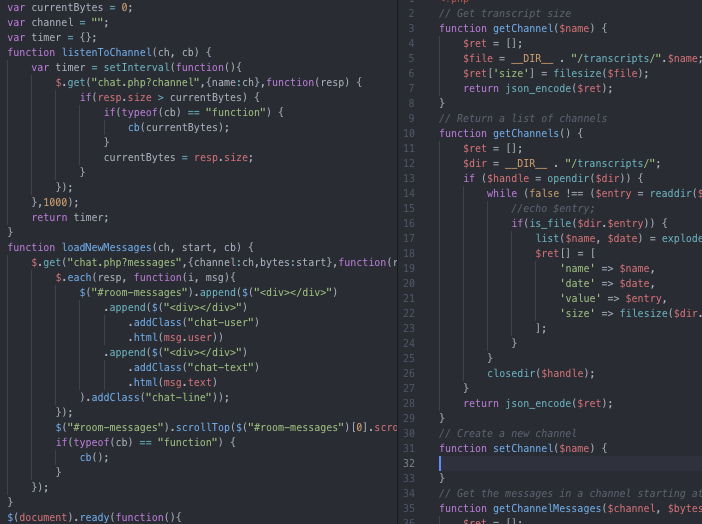

If you really want to get into my head, here’s an excerpt from the transcript. Some typos have been kept so you know it’s real. Five stars if you make it to the end… and maybe even a job offer if you understand all of it. 😉

Ryan

In the latest test, the synthesize-user-turn ran even though I had manually entered a “nudge” message well before the start. Future autonomous agents turns responded to the synthesized message and the manually entered message remained in waiting/ignored.

Take a step back and look at our strategy as patterns rather than flow control and see if you can find design friction and a better pattern to inject interrupt messages into the context during the spark run. We’ll want to reuse this pattern later so other services and users can insert notifications into the message stream.

Claude

The bug you saw

The “skip synth when last row is user” check is a peephole, not an invariant. A single autonomous turn runs many model rounds (see ai/agents/auto.py: the while True at line 44 keeps cycling until the model stops calling tools). Within that one call to stream_autonomous_session_events, the in-memory messages list is loaded once and mutated in place; the DB is being written to from inside that loop (tool_call / tool_response / assistant_response rows via stream_persisted_events), but the agent never re-reads the DB.

Your nudge is in the DB, but because tool_calls / tool_responses / assistant_response landed after it, the last-row check votes for synth, and the next turn’s model anchors on the fresher synth user. The nudge stays in “waiting” forever (or until the optimizer summarizes it away).
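To make the race concrete, here is a minimal Python reproduction of the last-row check. The `Row` type and `last_row_says_synth` helper are hypothetical stand-ins, not Spark's actual code; the only point is that any row the in-flight turn appends after the nudge flips the decision.

```python
from dataclasses import dataclass


@dataclass
class Row:
    # Hypothetical transcript row: only the type matters for this demo.
    type: str  # e.g. "user", "assistant_response", "tool_call", "tool_response"
    content: str


def last_row_says_synth(rows: list[Row]) -> bool:
    """The peephole: run synth unless the newest row happens to be a user row."""
    return not rows or rows[-1].type != "user"


transcript = [Row("assistant_response", "Planning my next experiment.")]

# The operator's nudge lands in the DB while the agent is still mid-turn...
transcript.append(Row("user", "Nudge: check the eval results first."))
print(last_row_says_synth(transcript))  # False: synth would be skipped

# ...but the same in-flight turn keeps persisting its own rows after it.
transcript.append(Row("tool_call", "search(...)"))
transcript.append(Row("tool_response", "3 results"))
transcript.append(Row("assistant_response", "Done with this round."))
print(last_row_says_synth(transcript))  # True: synth runs and buries the nudge
```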

Why the current design has friction

The current strategy is a flow-control branch based on a DB peephole: “look at the newest row; if it happens to be a user, assume there’s a pending human input.” That mixes three concerns into one check:

Where did this row come from? (agent vs. external)

Has the agent seen it yet in a turn that started after it arrived?

Should the inner-voice synthesis run this cycle?

None of those are actually “last row type.” You’re overloading position-in-a-log to carry a provenance flag and a delivery flag. That’s why any agent activity after the injection erases the signal, and why it won’t generalize: if the optimizer wanted to nudge (“your fine-tune finished”), or a cron job wanted to inject (“it’s morning, review your day”), or another user wanted to message — each would fight the same ordering race.

It’s also implicit in another way: nudge_spark broadcasts a MessagePersistedEvent directly to the SSE broker bypassing the worker, and relies on the worker to “notice” the row later. There’s no acknowledgement that the row was actually delivered to the agent. The frontend’s “graduation on TurnStartedEvent after persist” is the only observer of delivery, and it’s observing a proxy (a turn started) rather than a fact (the agent received this).

The pattern I’d propose: an inbox

Separate deposit from delivery. External signals (human, service, cron, webhook, future notifications) post into an inbox. The autonomous worker drains the inbox at the start of each cycle, promoting each entry into a transcript row and stamping it delivered. Synth runs only when the drain is empty.
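A minimal in-memory sketch of the deposit/delivery split, assuming one worker per session. `SparkSession`, `post`, `drain`, and `run_cycle` are illustrative names (the transcript only names `InboxRepository.post`), and a real version would persist both lists rather than hold them in memory.

```python
import itertools
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class InboxEntry:
    id: int
    source: str  # provenance as a column: "user", "service:optimizer", "cron:morning", ...
    role: str
    content: str
    delivered_message_id: Optional[int] = None  # set only when promoted to the transcript


@dataclass
class SparkSession:
    messages: list[dict] = field(default_factory=list)     # transcript; only the worker writes here
    inbox: list[InboxEntry] = field(default_factory=list)  # deposits from any injector
    _next_id: itertools.count = field(default_factory=lambda: itertools.count(1))

    def post(self, source: str, role: str, content: str) -> int:
        """Deposit: record the signal without touching the transcript."""
        entry = InboxEntry(next(self._next_id), source, role, content)
        self.inbox.append(entry)
        return entry.id

    def drain(self) -> list[InboxEntry]:
        """Delivery: promote pending entries into transcript rows, in arrival order."""
        drained = [e for e in self.inbox if e.delivered_message_id is None]
        for entry in drained:
            self.messages.append({"role": entry.role, "content": entry.content, "source": entry.source})
            entry.delivered_message_id = len(self.messages) - 1  # stamp delivered
        return drained

    def run_cycle(self) -> str:
        """Top of a worker cycle: drain first, synthesize only if nothing arrived."""
        skip_synth = bool(self.drain())
        if not skip_synth:
            self.messages.append({"role": "user", "content": "<synthesized inner voice>", "source": "synth"})
        return "skipped synth" if skip_synth else "ran synth"


session = SparkSession()
session.post("user", "user", "Check the eval results first.")
# The in-flight turn keeps appending its own rows after the deposit...
session.messages.extend({"role": "tool", "content": "..."} for _ in range(50))
# ...and the entry is still visibly pending.
print([e.id for e in session.inbox if e.delivered_message_id is None])  # [1]
print(session.run_cycle())  # skipped synth
print(session.run_cycle())  # ran synth
```

Because delivery is stamped on the entry itself, the 50 rows appended after the deposit do not hide it, and the synth decision reduces to whether the drain returned anything.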

Why this is the right shape

Provenance is a column, not a position. source = ‘user’ | ‘service:optimizer’ | ‘cron:morning’ | …. Anyone inspecting the inbox knows who posted what.

Delivery is a fact, not a guess. delivered_at / delivered_message_id are set exactly when the worker promotes an inbox entry into a transcript row. No race with mid-turn appends. The worker can append 50 tool_response rows after the nudge lands and the inbox row is still visibly pending.

Drain is atomic and ordered. Multiple posts between turns get drained as a batch in arrival order. No “which one wins” question.

Synth stays a one-liner. skip_synth = any_drained_this_cycle. Clean boolean, no peephole.

Extensible by construction. Adding a new injector is a single call to InboxRepository.post(session_id, source=…, role=…, content=…). Cron, optimizer “your weights finished,” a collaborator’s message, a webhook from an external system — all identical.

UI gets a real contract. POST /chat/sparks/{id}/nudge returns {inbox_id} immediately; when the worker drains, it publishes an InboxDeliveredEvent {inbox_id, message_id}. The frontend “pending bubble” graduates on that exact event, no more TurnStartedEvent proxy bookkeeping.

Optional wake-up for free. Posting sends a pg_notify on a spark_inbox channel; the worker’s cooldown becomes “sleep 2s OR wake on inbox signal” — sub-second delivery instead of up-to-2s latency. No separate queue infrastructure.
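The “sleep 2s OR wake on inbox signal” cooldown could look roughly like this with `asyncio.wait`. An `asyncio.Event` stands in for the `spark_inbox` LISTEN channel; in a real deployment something like a Postgres LISTEN callback (for example one registered with asyncpg's `add_listener`) would set it. `wait_for_next_cycle` is a hypothetical name.

```python
import asyncio


async def wait_for_next_cycle(inbox_signal: asyncio.Event, cooldown: float = 2.0) -> str:
    """Sleep for the cooldown OR wake as soon as the inbox is signaled."""
    sleeper = asyncio.create_task(asyncio.sleep(cooldown))
    notified = asyncio.create_task(inbox_signal.wait())
    done, pending = await asyncio.wait({sleeper, notified}, return_when=asyncio.FIRST_COMPLETED)
    for task in pending:
        task.cancel()  # stop whichever waiter lost the race
    await asyncio.gather(*pending, return_exceptions=True)
    inbox_signal.clear()  # re-arm; the cycle that follows will drain whatever was posted
    return "inbox" if notified in done else "cooldown"


async def demo() -> list[str]:
    signal = asyncio.Event()
    # Stand-in for a pg_notify arriving 0.1s into a 2s cooldown.
    asyncio.get_running_loop().call_later(0.1, signal.set)
    first = await wait_for_next_cycle(signal, cooldown=2.0)
    second = await wait_for_next_cycle(signal, cooldown=0.2)  # nothing posted this time
    return [first, second]


print(asyncio.run(demo()))  # ['inbox', 'cooldown']
```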

What goes away

The last_message_type heuristic and its repo method.

TurnStartedEvent and the frontend’s “sawTurnStart” tracking, replaced by the explicit InboxDeliveredEvent correlation on inbox id.

nudge_spark writing to the messages table directly and publishing its own MessagePersistedEvent — the worker becomes the sole writer of transcript rows, which tightens the invariants on the messages table.

Shape of the change (for scope calibration)

New table inbox_messages(id, session_id, source, role, content, created_at, delivered_at, delivered_message_id).

New InboxRepository with post, list_pending(session_id), mark_delivered(inbox_id, message_id).

Worker cycle top: drained = drain_inbox(session_id); for each, append to messages, mark delivered, publish InboxDeliveredEvent. If drained was non-empty, skip synth.

nudge_spark posts to inbox + pg_notify(‘spark_inbox’, spark_id) + returns {inbox_id}.

Worker sleep replaced by asyncio.wait of (cooldown timer, inbox-notify listener).

New SSE event inbox_delivered {inbox_id, message_id}. Frontend reconciles pending bubble by inbox_id.

Remove TurnStartedEvent, remove last_message_type.
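For scope calibration, the proposed table and repository might look like this. SQLite stands in for the Postgres database implied by `pg_notify`, and the column types and the `InboxRepository` method bodies are assumptions; only the names come from the plan above.

```python
import sqlite3
from datetime import datetime, timezone

SCHEMA = """
CREATE TABLE inbox_messages (
    id INTEGER PRIMARY KEY AUTOINCREMENT,
    session_id TEXT NOT NULL,
    source TEXT NOT NULL,            -- 'user', 'service:optimizer', 'cron:morning', ...
    role TEXT NOT NULL,
    content TEXT NOT NULL,
    created_at TEXT NOT NULL,
    delivered_at TEXT,               -- NULL until the worker promotes the entry
    delivered_message_id INTEGER     -- transcript row the entry became
);
"""


def _now() -> str:
    return datetime.now(timezone.utc).isoformat()


class InboxRepository:
    def __init__(self, conn: sqlite3.Connection) -> None:
        self.conn = conn

    def post(self, session_id: str, source: str, role: str, content: str) -> int:
        cur = self.conn.execute(
            "INSERT INTO inbox_messages (session_id, source, role, content, created_at) "
            "VALUES (?, ?, ?, ?, ?)",
            (session_id, source, role, content, _now()),
        )
        self.conn.commit()
        return cur.lastrowid

    def list_pending(self, session_id: str) -> list[tuple]:
        # ORDER BY id gives arrival order even if two created_at values tie.
        return self.conn.execute(
            "SELECT id, source, role, content FROM inbox_messages "
            "WHERE session_id = ? AND delivered_at IS NULL ORDER BY id",
            (session_id,),
        ).fetchall()

    def mark_delivered(self, inbox_id: int, message_id: int) -> None:
        self.conn.execute(
            "UPDATE inbox_messages SET delivered_at = ?, delivered_message_id = ? WHERE id = ?",
            (_now(), message_id, inbox_id),
        )
        self.conn.commit()


conn = sqlite3.connect(":memory:")
conn.executescript(SCHEMA)
repo = InboxRepository(conn)
first = repo.post("spark-1", "user", "user", "Check the eval results first.")
repo.post("spark-1", "service:optimizer", "system", "Your fine-tune finished.")
print([row[1] for row in repo.list_pending("spark-1")])  # ['user', 'service:optimizer']
repo.mark_delivered(first, message_id=42)
print([row[1] for row in repo.list_pending("spark-1")])  # ['service:optimizer']
```

In Postgres, the worker would probably wrap `list_pending`, the transcript inserts, and `mark_delivered` in one transaction so a crash cannot leave an entry half-delivered.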

…

Ryan

The turn-start-event is used so that we have a signal to the frontend that displays a tool being triggered before a long input step is returned, so the user isn’t in the dark wondering what’s happening. I wouldn’t replace it.

I like this idea, but could we make it simpler? If we add provenance/source and “seen_at” to the messages table and inject any new message/nofication as system messages that both the autonomous agent and synthetic-turn agents see in their message history naturally, then we don’t have to try and replace or substitute messages. Unseen system messages become interrupts that get checked for at the start of eact turn, and before any next tool call (except for maybe tool responses).
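Ryan's alternative boils down to a two-column migration plus one “take unseen interrupts” query. Here is a hedged SQLite sketch; the simplified `messages` columns other than `source` and `seen_at`, and the `inject` / `take_interrupts` helpers, are hypothetical.

```python
import sqlite3
from datetime import datetime, timezone

conn = sqlite3.connect(":memory:")
# Simplified stand-in for the existing transcript table (real columns assumed).
conn.execute(
    "CREATE TABLE messages (id INTEGER PRIMARY KEY AUTOINCREMENT, session_id TEXT, type TEXT, content TEXT)"
)
# The entire migration in this proposal: two nullable columns.
conn.execute("ALTER TABLE messages ADD COLUMN source TEXT")
conn.execute("ALTER TABLE messages ADD COLUMN seen_at TEXT")


def inject(conn: sqlite3.Connection, session_id: str, source: str, content: str) -> int:
    """Any injector (operator, optimizer, cron) writes an unseen system row."""
    with conn:  # commit on success, roll back on error
        cur = conn.execute(
            "INSERT INTO messages (session_id, type, content, source) VALUES (?, 'system', ?, ?)",
            (session_id, content, source),
        )
    return cur.lastrowid


def take_interrupts(conn: sqlite3.Connection, session_id: str) -> list[tuple]:
    """Return unseen system rows in arrival order and stamp them seen."""
    with conn:
        rows = conn.execute(
            "SELECT id, source, content FROM messages "
            "WHERE session_id = ? AND type = 'system' AND seen_at IS NULL ORDER BY id",
            (session_id,),
        ).fetchall()
        seen_at = datetime.now(timezone.utc).isoformat()
        conn.executemany("UPDATE messages SET seen_at = ? WHERE id = ?", [(seen_at, r[0]) for r in rows])
    return rows


with conn:
    conn.execute("INSERT INTO messages (session_id, type, content) VALUES ('spark-1', 'tool_response', '...')")
inject(conn, "spark-1", "spark:operator", "Check the eval results first.")
print(take_interrupts(conn, "spark-1"))  # [(2, 'spark:operator', 'Check the eval results first.')]
print(take_interrupts(conn, "spark-1"))  # []
```

In Postgres, a single `UPDATE messages SET seen_at = now() WHERE ... AND seen_at IS NULL RETURNING ...` would likely fetch and stamp the rows atomically.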

What do you think?

Claude [thought]:

The user is proposing a different approach: keep TurnStartedEvent for its UI purpose, add source and seen_at columns to the messages table instead of a separate inbox, and treat external injections as system messages—though I need to clarify what message types are currently supported since the list seems cut off.

Both agents would see these system messages naturally in conversation context, and unseen ones (where seen_at IS NULL) act as interrupts checked at turn start and between model rounds. The main advantages are simplicity—just two nullable columns—and a unified transcript where everything lives in messages. The main concern is whether the schema allows a new system type, or if I should use source for provenance while keeping type as user for human nudges to preserve natural conversation flow.

Actually, rethinking this: the user explicitly wants external injections as system messages, which makes sense architecturally. In the spark model, there’s no traditional “user” partner—the agent is autonomous and the synthetic messages are its inner voice. Injecting a human nudge as another “user” row would blur that conceptual boundary. Modeling it as system with a source column to distinguish “spark:operator” from “service:optimizer” is cleaner—it’s an out-of-band harness-level override, not a conversation turn.

For the autonomous loop, each iteration checks for unseen system messages at the start and before each model round (except when waiting on a tool response), then runs the model and processes the result.

The key constraint is the tool_call/tool_response pairing: OpenAI’s API requires them to be adjacent, so splicing a system message between them would break the contract. The safe injection point is after all pending tool_responses land and before the next model invocation—that’s when the message list is consistent and the pairing is complete.
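The pairing constraint can be expressed as a small guard. This sketch assumes OpenAI-style chat message dicts (`tool_calls` entries with an `id`, tool messages with a `tool_call_id`); `is_safe_splice_point` and `splice_system` are hypothetical helpers. It refuses to append while any call is still outstanding, which in an append-only list is what keeps each response adjacent to its call.

```python
def is_safe_splice_point(messages: list[dict]) -> bool:
    """True when every assistant tool call already has its tool response.

    Assumes OpenAI-style chat dicts: assistant messages may carry "tool_calls"
    (each with an "id"); tool messages carry the matching "tool_call_id".
    """
    issued = {
        call["id"]
        for msg in messages
        if msg["role"] == "assistant"
        for call in msg.get("tool_calls") or []
    }
    answered = {msg["tool_call_id"] for msg in messages if msg["role"] == "tool"}
    return issued <= answered


def splice_system(messages: list[dict], content: str) -> None:
    """Append an interrupt only where it cannot separate a call from its response."""
    if not is_safe_splice_point(messages):
        raise ValueError("outstanding tool_call: splicing here would break the pairing")
    messages.append({"role": "system", "content": content})


messages = [
    {"role": "user", "content": "Keep researching."},
    {"role": "assistant", "content": None,
     "tool_calls": [{"id": "call_1", "type": "function",
                     "function": {"name": "search", "arguments": "{}"}}]},
]
print(is_safe_splice_point(messages))  # False: call_1 has no response yet
messages.append({"role": "tool", "tool_call_id": "call_1", "content": "3 results"})
splice_system(messages, "[operator] Check the eval results first.")
print(messages[-1]["role"])  # system
```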

For the “seen_at” tracking, I need to handle the fact that the autonomous loop keeps the messages list in memory across rounds. At the start of each cycle, I load messages from the database (including any unseen system rows) and mark them seen, then the model processes them. But new system messages might arrive mid-cycle, so at the top of the next round I need to re-query for any new unseen rows, splice them into the in-memory list, and mark those seen too.
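The refresh-at-the-top-of-each-round idea, sketched with a callback so the loop doesn't care where interrupts come from. This is not the actual loop in ai/agents/auto.py: `run_autonomous_turn`, `fetch_unseen`, and the fake model are hypothetical, and compaction and real tool execution are left out.

```python
from typing import Callable

Message = dict


def run_autonomous_turn(
    messages: list[Message],
    fetch_unseen: Callable[[], list[Message]],
    run_model_round: Callable[[list[Message]], list[Message]],
    max_rounds: int = 10,
) -> list[Message]:
    """Model-round loop that re-checks for interrupts at the top of every round."""
    for _ in range(max_rounds):
        # Top of the round: the previous round's tool_calls are all answered,
        # so newly arrived system rows can be spliced in safely.
        messages.extend(fetch_unseen())
        new_rows = run_model_round(messages)
        messages.extend(new_rows)
        if not any(row.get("tool_calls") for row in new_rows):
            break  # the model answered without calling tools: turn is over
    return messages


inbox: list[Message] = []  # stand-in for unseen system rows in the DB


def fetch_unseen() -> list[Message]:
    rows = list(inbox)
    inbox.clear()  # stand-in for stamping seen_at
    return rows


def fake_model_round(messages: list[Message]) -> list[Message]:
    if any(m["role"] == "system" for m in messages):
        return [{"role": "assistant", "content": "Switching to the eval results."}]
    # First round: the model calls a tool, and an operator nudge arrives meanwhile.
    inbox.append({"role": "system", "content": "[operator] Check the eval results first."})
    return [
        {"role": "assistant", "content": None, "tool_calls": [{"id": "call_1"}]},
        {"role": "tool", "tool_call_id": "call_1", "content": "3 results"},
    ]


result = run_autonomous_turn([{"role": "user", "content": "Keep researching."}], fetch_unseen, fake_model_round)
print([m["role"] for m in result])  # ['user', 'assistant', 'tool', 'system', 'assistant']
```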

For the continue synth agent, I’m wondering whether unseen system messages should interrupt the synthesis entirely or just get passed as additional context. The user’s language about “interrupts that get checked for at the start of each turn” suggests the former — if there’s an unseen system message, it takes priority and the autonomous agent responds to it directly rather than running the synth.

But actually, the cleaner approach might be to always run synth with the system message in view, so the inner voice can naturally incorporate the operator’s prompt into the next user turn. That said, there’s a risk the synth layer dilutes the interrupt’s directness. The safest call is probably to skip synth when an unseen system message arrives and let the autonomous agent respond to it unfiltered.

Wait, I need to think about how the API actually handles this. System messages are instructions, not turns that demand a response—if the context ends with a system message and no user turn, the agent might not know what to do. The real issue is that synth generates the user turn that drives the autonomous loop. If I skip synth when a system message arrives, the autonomous agent runs with no trailing user turn, which is awkward. But if I always run synth, it naturally sees the system message and produces a proper user turn for the autonomous agent to respond to. That’s actually the cleaner design—let both agents see the system message naturally through the conversation context, and let synth do its job of bridging to the next user turn.

Now I’m reconsidering what “unseen system messages become interrupts” actually means. The user isn’t saying to skip synth—they’re saying the code should actively check for newly-arrived unseen system messages at turn boundaries and between tool-call rounds, then splice them into the in-memory conversation list. So the check is about timing and visibility, not about replacing the normal flow. External injections come in as system messages with metadata like source and seen_at, and the agent gets a chance to see them before proceeding.

The elegant part is that system messages are instructions from the harness, not conversation turns—so even if synth runs and appends another user message after a system injection, the autonomous agent sees both the system directive and the synth output in context, and a well-prompted agent will naturally respond to the system instruction. This sidesteps the whole “skip synth” question because system messages don’t compete with turns; they’re out-of-band guidance that both agents can incorporate.

For implementation, I need to extend the schema to support system as a message type, add source and seen_at columns for tracking, then update the conversation builders in both the chat and autonomous services to handle system messages as role="system" without role-swapping. The mid-turn splice logic should check for unseen system rows between model rounds and inject them into the in-memory message list, with a clean callback abstraction that the autonomous loop calls at each iteration to refresh the inbox.

The nudge_spark service becomes simpler: just insert a system message with source="spark:operator" instead of relying on the skip-synth heuristic. I can remove the last_message_type check entirely since synthesis always runs now, and the frontend’s pending nudge graduation logic adapts to the new system message flow.

For the UI rendering, there’s a tension: if external nudges are system messages, they need distinct styling, but the user expects the “light blue → dark blue” graduation effect. The cleanest approach is to render system messages with source="user" as user-style bubbles on the frontend, keeping the backend semantic separate from the UI presentation. This is a UX decision worth flagging, but the unified transcript approach is clearly better than my original idea since it avoids a new table, maintains one source of truth, and lets the existing conversation rebuild logic handle it naturally. The user is asking for my opinion, so I should validate their approach, explain why it’s cleaner than my original inbox idea, flag the key design decisions that still need resolving, and offer to build it out.

Claude [Response]

I like it better than the inbox. Two things I was overcomplicating:

- A second table is overkill. The messages table is already the ordered transcript; an inbox duplicates the ordering semantics and forces a two-phase move. Provenance + seen flag on the existing row reuses everything we already built for persistence, SSE, reload, and compaction.

- The skip_synth branch disappears entirely. If the interrupt enters the transcript as a real row with the right role, both the synth and the autonomous turn pick it up through the normal _build_conversation / _build_continue conversation paths. No branching, no race, no peephole. The “problem” of the synth running on top of a nudge stops being a problem: the synth sees the nudge as prior context and naturally writes around it instead of replacing it.

- `system` is the right role choice. The agent has no human correspondent in the classical chat sense; “user” turns are the inner voice. An operator or service injection is out-of-band harness input, which is exactly what system means to the model. source carries “who sent this” (operator, service, cron) so the prompt can render it as [operator] … and the model knows whose instruction it is.

- Mid-turn splice point is clean. stream_auto_events has exactly one safe splice point per iteration: top of the while True after compact_messages and before run_model_round. At that point the in-memory messages list is guaranteed to have every issued tool_call paired with its tool_response (OpenAI-compatible APIs reject a splice between them). That’s also “before the next tool call, except tool responses” in your wording.
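The source-tagged rendering in the third point might look like the following. `ROLE_BY_TYPE`, `SOURCE_LABELS`, `build_conversation`, and the row shape are assumptions for illustration; the transcript does not show Spark's real `_build_conversation`.

```python
ROLE_BY_TYPE = {"user": "user", "assistant_response": "assistant", "system": "system"}
SOURCE_LABELS = {"spark:operator": "operator", "service:optimizer": "optimizer", "cron:morning": "cron"}


def build_conversation(rows: list[dict]) -> list[dict]:
    """Map transcript rows to chat messages; system rows keep role "system" (no role swap)."""
    conversation = []
    for row in rows:
        role = ROLE_BY_TYPE.get(row["type"])
        if role is None:
            continue  # other row types (tool calls/responses) are skipped in this sketch
        content = row["content"]
        if role == "system":
            source = row.get("source")
            label = SOURCE_LABELS.get(source, source or "harness")
            content = f"[{label}] {content}"  # tell the model whose instruction this is
        conversation.append({"role": role, "content": content})
    return conversation


rows = [
    {"type": "user", "content": "What should I study next?"},
    {"type": "system", "source": "spark:operator", "content": "Check the eval results first."},
    {"type": "assistant_response", "content": "Looking at the eval results now."},
]
print(build_conversation(rows)[1])  # {'role': 'system', 'content': '[operator] Check the eval results first.'}
```

Unknown sources fall through as their raw value (for example a webhook source string), so a new injector needs no code change to be attributed in the prompt.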

A few things I want to nail down before I plan:

[there’s a plan, but I think the point is overmade]

…

I think any senior developer would agree… this is about the most rewarding experience you can have in iterative software development: real decision-making, informed weighing of options, true product awareness, and a launch point for building the thing you intend, with confidence that the model will build the right thing.

What this was actually about

I’m building a harness called Spark that lets open-weight models research on the internet and fine-tune themselves around their own goals, or a user’s or operator’s goals (more on that to come).

This particular interaction was with Claude Opus 4.7, though in practice I switch between Claude, Codex, Cursor, and built-in models depending on token cost, verification effort, and what kind of reasoning I need.

If anyone’s interested, I can write more about either the Spark architecture itself or how I think about working with these systems as a senior engineer.

Thanks for making it all the way down here! I owe you a drink.
